Why we do it

Digital public participation has become an established format in democratic societies. Although early, comprehensive, and transparent involvement is key to successful planning processes, there have been only a few encouraging experiments with multimedia participation approaches, and existing ones are often not tailored to the needs of citizens. Different forms of representation, such as thematic maps, diagrams, aerial photographs, videos, and animations, have so far been used only in isolation. The project's overall research question is whether current multiuser and multimedia techniques can enhance the effectiveness and efficiency of participatory planning procedures. In particular, it examines the systematic combination of visualization types for three participation settings (off-site, on-site, online) and for the target devices used in each.

In the long term, PaKOMM contributes to more effective and efficient decision-making in collaborative participation processes.

What we do

We develop mixed-media tools that stakeholders can use for co-creative planning:

Touch Table

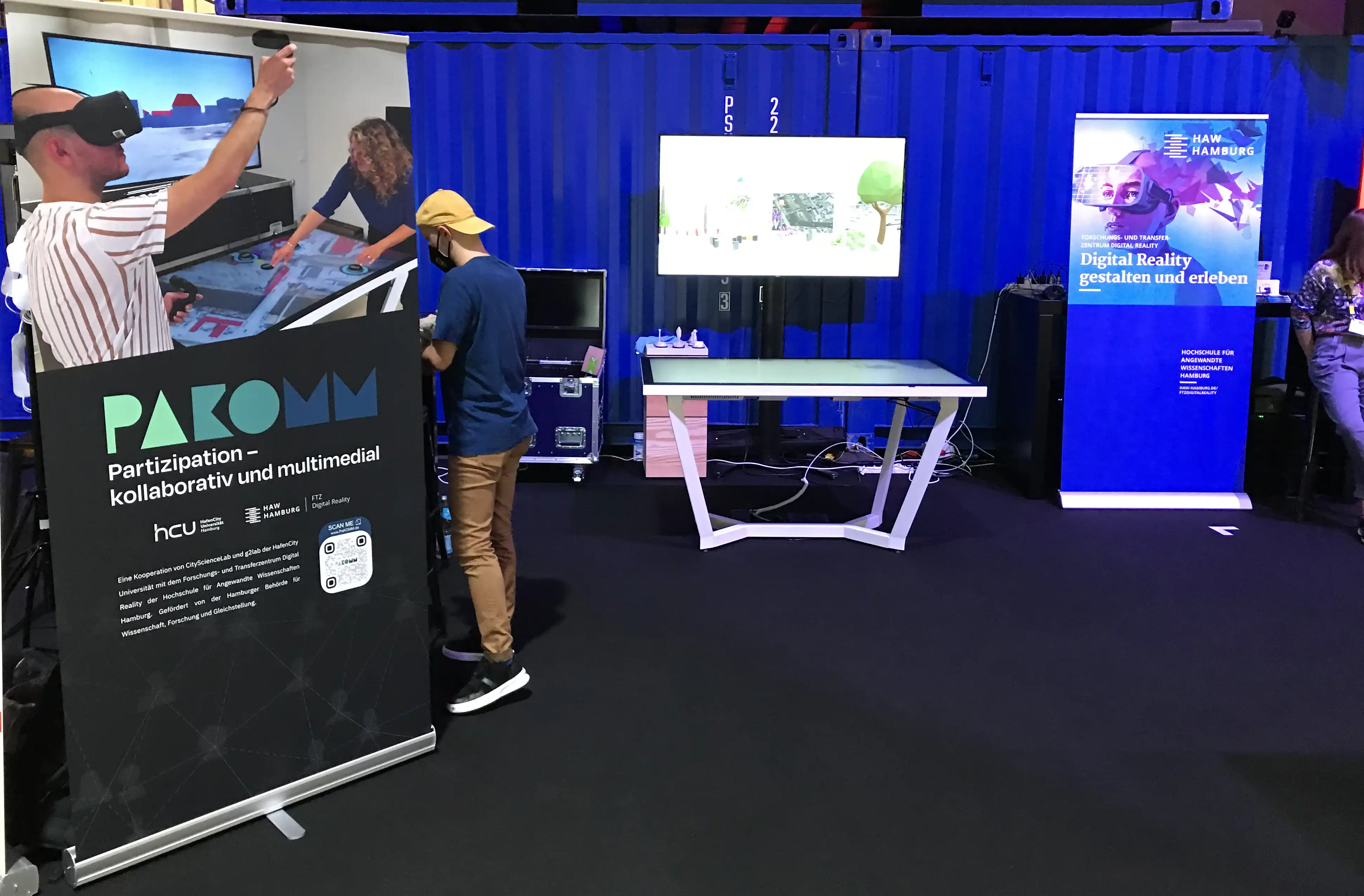

At a touch table, individuals or groups can view and modify plans collaboratively.

VR App

With VR headsets, these plans can be viewed in 3D, either at the same time or afterwards, and changed again if necessary.

AR App

With an AR app, plans can be reviewed on site and, if necessary, changed and commented on again.

Tablet App

With a tablet app, individuals can plan on the go with the same capabilities as at the touch table.

How we do it

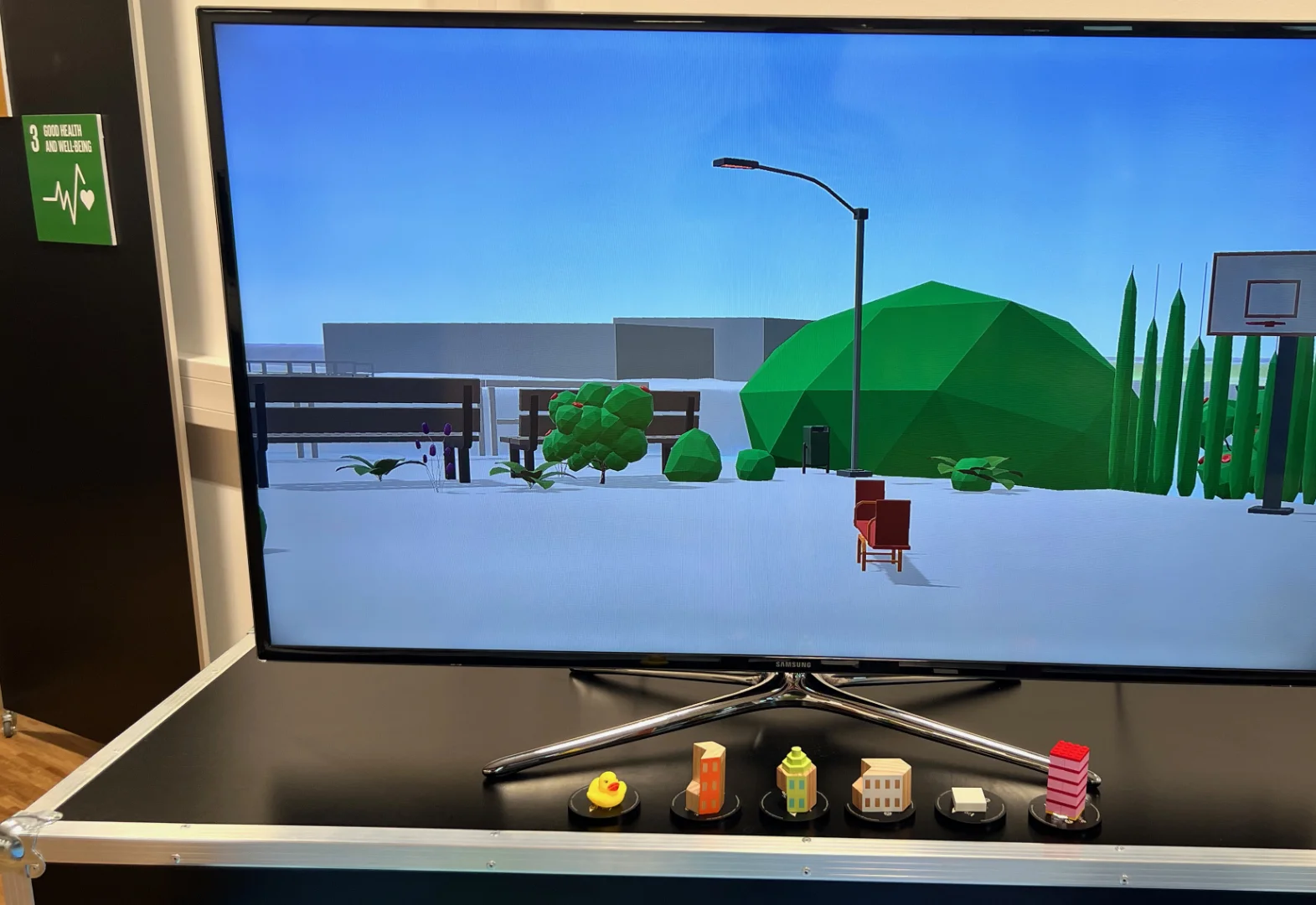

To work on planning scenarios in larger groups, we use a 65" UHD touch table with marker recognition. Markers can be placed anywhere on the touch table and allow additional interaction beyond traditional touch input. A display mounted above the touch table completes the stationary setup and allows interactive exploration of the plans from user-selectable bird's-eye and pedestrian perspectives.

To design and experience planning versions immersively, we use virtual reality headsets. A controller-based input concept enables a wide range of interactions. The planning process is co-creative in the virtual environment as well: participants wearing headsets are networked with each other, see each other as avatars, can communicate verbally, and design plans together. Handwritten annotations can be created directly in the virtual environment.

In the real, built environment, planning scenarios can be created, viewed, and commented on via an AR app. For an optimal experience, we rely on tablets with LiDAR scanners and on the recognition of feature points in the environment (Visual Positioning) to overlay virtual elements on the real world as precisely as possible. The application will be finalized shortly.

To address mobile and other scenarios where stakeholders cannot rely on the large touch table, we developed a tablet app. The app can be extended with an external display via USB-C to view the plans from the additional user-selectable bird's-eye and pedestrian perspectives. The planning experience is thus at the same level as on the touch table.

Meet the team

This joint research project combines the expertise of the Lab for Geoinformatics and Geovisualization (g2lab, lead) and the City Science Lab (CSL) of HafenCity University Hamburg, as well as the FTZ Digital Reality of Hamburg University of Applied Sciences.

Prof. Dr.-Ing. Jochen Schiewe

Prof. Dr. phil. Gesa Ziemer

Prof. Dr.-Ing. Roland Greule

Patrick Postert

Dr. phil. Heike Lüken

Anna E. M. Wolf

Dr. phil. Hilke Berger

Imanuel Schipper

The project is funded by the Hamburg Ministry of Science, Research and Equality (BWFG).

Contact

Want to demo the apps?

Address

Heike Lüken

Hongkongstraße 8

20457 Hamburg

Germany

Phone

+49 (0)40 – 42827-5621